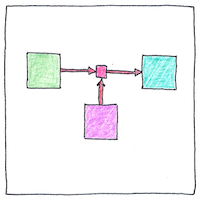

Claude Shannon

telecommunication


Information theory

Information consists of symbol sequences (like these syllables) that can be counted, reduced to zeros and ones, stored, and communicated without regard for meaning or importance. Claude Shannon showed that an arrangement of switches could resolve any logical or arithmetic problem. He laid down fundamental limits on information storage, transmission, and processing.

Information entropy

The cost of writing information is, at minimum, one bit for a true or false. In practice, much more than that. How many interviews, how many reviews to make a few improvements to a manual? How many times must I repeat myself?
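To make the one-bit minimum concrete, here is a minimal sketch in Python of Shannon's entropy measure, estimated from how often each symbol occurs in a sequence. The function name `entropy_bits` is only an illustrative choice, and the calculation assumes the observed frequencies stand in for the true probabilities.

```python
import math
from collections import Counter

def entropy_bits(symbols):
    """Estimate Shannon entropy, in bits per symbol, from observed frequencies."""
    counts = Counter(symbols)
    total = sum(counts.values())
    # Each symbol with probability p = n / total contributes p * log2(1 / p).
    return sum((n / total) * math.log2(total / n) for n in counts.values())

# An evenly split true-or-false costs one bit per answer.
print(entropy_bits([True, False]))  # 1.0

# A message that only repeats itself carries no new information.
print(entropy_bits("aaaa"))  # 0.0
```

By this measure, repeating myself costs effort but adds nothing: the second call returns zero bits per symbol.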

Success of English

English pronunciations and spellings are irrational and weird, idioms and euphemisms are mysterious, but English has succeeded worldwide because it is the one language that you may butcher, mangle, and contort freely, and still be understood: the ideal language for poetry.

One can say a lot about information without concern for meaning or importance. Even a poet concerned mainly about meaning might count syllables. Claude Shannon’s ideas contributed to many fields including digital circuit design, data compression, sampling theory, cryptography, artificial intelligence, natural-language processing, genetics, and game theory.
